New Delhi: On a quiet Sunday morning, Sumeet Rathore, a top executive at a tech multinational, sat at the kitchen table with a cup of cold coffee and a growing sense of unease.

For the past week he had been feeling an odd pressure in his chest. It wasn’t exactly pain; more like a persistent tightness that appeared when he climbed the stairs or carried groceries. His primary care physician couldn’t see him for another three days, and the idea of spending hours waiting in an emergency department felt excessive.

So Sumeet did what millions of people now do when confronted with a medical question.

He opened his laptop and asked an AI chatbot: “Why does my chest feel tight when I exercise?”

Within seconds the program produced a calm and orderly explanation: anxiety, acid reflux, muscle strain. The list of possibilities sounded reassuringly mundane. It suggested rest, hydration, and monitoring symptoms. Sumeet closed the laptop and went about his day.

Two nights later, he was rushed to the hospital with a heart attack.

Sumeet’s story is fictional. But the scenario is increasingly plausible. Around the world, people are turning to conversational AI systems, such as OpenAI’s ChatGPT, Anthropic’s Claude, and Google’s Gemini, for answers to medical questions once reserved exclusively for doctors.

The appeal is obvious. These systems are available around the clock. They can interpret medical jargon, summarize laboratory reports, and offer explanations in seconds. For patients navigating complicated healthcare systems, the technology can feel like a lifeline. Yet medicine is not simply an exchange of information. It is a practice grounded in experience, judgment, and context, qualities that AI still struggles to replicate.

As AI becomes an increasingly common source of health guidance, a critical question emerges: Can a machine safely stand between a patient and a medical decision?

The New ‘First Stop’

For decades the internet has served as an informal medical advisor. Patients searched symptoms on websites, often emerging with equal parts information and anxiety. Conversational AI has changed that dynamic. Instead of combing through multiple webpages, users can now type a question such as “Why does my head hurt when I stand up?” or “What do these blood test numbers mean?” and receive a neatly structured explanation almost instantly.

The shift has been rapid. Millions of people now ask AI chatbots about symptoms, medications, and medical conditions every day. For many, these tools have become the first place they turn when something feels wrong.

Much of the appeal comes from accessibility. Healthcare systems around the world face physician shortages and long waiting times for appointments. AI tools can provide immediate responses when patients might otherwise have none.

But doctors warn that convenience can hide important limitations. Some medical experts say diagnosis is not simply an exchange of information but an interactive process that develops through careful questioning. They note that systems like ChatGPT often miss one of a doctor’s core functions—responding to a patient’s concern by asking follow-up questions that help clarify symptoms and guide the diagnosis.

In a typical consultation, physicians ask follow-up questions that gradually narrow possible diagnoses. Without that dialogue, even accurate medical information may be incomplete.

Researchers have spent several years testing how well large language models (LLMs) perform in medical contexts, and the results are mixed. Some studies show AI systems achieving accuracy rates above 70% when evaluating clinical scenarios — an outcome that has surprised many experts and fuelled optimism about the technology’s potential.

Other research shows much greater variability. Across certain medical tasks, average accuracy sometimes falls closer to 50-60%. In medicine, even small margins of error matter.

A US surgeon who studies AI in clinical practice recalls a patient who arrived with a printed conversation from an AI chatbot. The chatbot had warned that a medication the surgeon had prescribed carried a dangerously high risk of pulmonary embolism. When she looked into it, she found that the statistic came from a study involving tuberculosis patients. “It didn’t apply to my patient’s case at all,” she says.

The chatbot had located a real statistic but applied it incorrectly. This type of error is common with language models, which generate responses based on patterns in text rather than true clinical reasoning.

Despite these risks, AI health tools continue to grow in popularity.

One reason is that they can be “genuinely helpful”. Medical information is often difficult for patients to interpret. Laboratory reports contain unfamiliar abbreviations and numerical ranges, and diagnoses are frequently described using complex technical language. AI systems excel at translating complicated information into clear explanations.

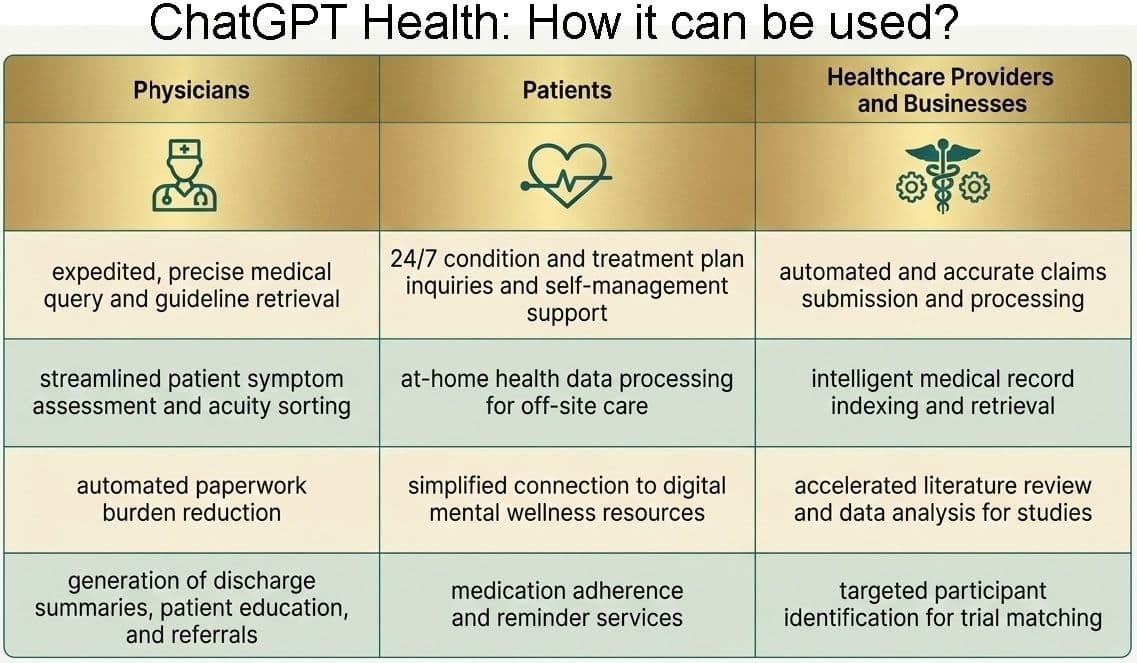

Generative AI tools are already being used to explain topics ranging from women’s sexual health to surgical procedures such as hip replacement. Some systems generate postoperative instructions, simplify medical research papers, or summarize clinical trial information.

Others attempt to personalize explanations using a patient’s own health data.

Even enthusiastic supporters stress that these tools should complement physicians rather than replace them.

Rise of ChatGPT Health

Recognizing growing demand for medical guidance, technology companies are developing specialized AI systems designed for healthcare.

One example is ChatGPT Health, an experimental tool intended to help users interpret medical data and understand their health records. According to Nate Gross, who leads healthcare strategy at OpenAI, many ChatGPT users already ask health-related questions. Of the roughly 800 million weekly users, about one in four seeks medical information.

To improve those interactions, ChatGPT Health allows users to upload broader personal health data, including laboratory results, imaging reports, and data from wearable devices such as smartwatches. The goal is to give the system more context when explaining medical information.

Still, Gross emphasizes that the tool is not meant to diagnose disease. “We train our models specifically to guide patients to health care professionals for diagnosis and treatment,” he says. “We’re looking to give people information, not tell them if they’re sick or healthy.”

As AI health tools expand, concerns about privacy are growing. Unlike hospitals and insurers, many AI platforms are not governed by the same legal protections for patient data.

So, for now, doctors advise patients interested in experimenting with AI health tools to proceed carefully until stronger safeguards are established.

Another challenge lies in how people communicate with AI systems. Even when models possess strong medical knowledge, their responses depend heavily on the quality and completeness of information users provide.

This problem became evident in a study conducted by researchers at the Oxford Internet Institute. When researchers presented clinical cases directly to AI models, including OpenAI’s GPT-4o and Meta’s Llama 3, the systems correctly identified the underlying condition about 95% of the time.

But when ordinary participants described the same cases to the models, accuracy dropped dramatically. The systems identified the condition correctly only about one-third of the time.

The limiting factor was the human-AI communication loop, doctors say. Users often provided incomplete information, the models sometimes misinterpreted key details, and people sometimes ignored relevant diagnostic suggestions during the conversation.

Participants also tended to underestimate the seriousness of symptoms, which could delay seeking care.

When AI Advice Goes Wrong

Such misunderstandings can have serious consequences. In simulated emergency scenarios, some AI systems advised patients with signs of stroke, respiratory distress, or suicidal thoughts to seek routine care rather than urgent treatment. Even if these cases represent a minority of responses, the potential risks are significant.

While experts remain cautious about AI as a diagnostic tool, many see promise in more focused applications. Some physicians are developing systems that translate complex medical research into language patients can understand. Online medical information, they say, often falls into two extremes: oversimplified blog posts or dense academic papers. Generative AI could bridge that gap.

There’s no denying that AI is reshaping medicine. But medical experts emphasize that AI remains a tool, not a replacement for professional medical judgment. For now, the safest role for AI in healthcare may be the simplest one: an assistant in the conversation, not the doctor.